Building a documentation framework for shoggoth-speak

Everybody knows that "conlanging", the hobby of creating languages, is infamously interminable - one is always rejiggering vocab, grammar, phonology, and the like. And yet, when the Muse sings, one invariably must answer her call. I knew I was toast going in - my free time was as good as lost - but I had the foresight to create a tool which sped up the editing process considerably. This article is about the creation of lugso.net, and the language there specified.

Part I: necessity is the black goat of the woods with a thousand young of invention

Remember COVID? Those were some fun times. The best part of being a snail in an aquarium that you can't escape is the fact that you only have getting older to do every day - unless you make something up for yourself.

Around October of 2020, I did just that. After a couple of weeks of gaming together with my weekly group - which, in spite of social distancing, masking, yada yada, regulations, and the collapsing society around us, I kept meeting up with every single week in person, no masks, because each of us (on both sides of the aisle) agreed that masks were pointless - I decided that since we were playing Call of Cthulhu together, what we needed was a little extra something to make the experience more satisfying. So, I created a constructed language to evoke in the minds of my players the effect of a Cthulhu cultist incanting eldritch spells. Maximum immersion!

But, as anybody who has ever even dabbled, conceived of dabbling, or planned to conceive of dabbling in constructed languages knows, the process of creating a language involves dozens, hundreds of revisions. My initial idea was this: to create in the minds of the players the effect of something alien and strange, ululating, harmful, sibilant, I would only accept into the language consonants with the fricative property - that means f, s, sh, z, all of those classics of phonology that produce their noise by grinding two parts of your mouth together and kind of hissing a little bit, like a snake. And so, as the speakers of this language would enunciate their horrible tones, they would seem to be hissing, and fouling, writhing, and all that. So, that was a smashing success[1] - but the way I got there was by creating a lot of words and rules and particles, which took lots and lots and lots of doing, over and over and over.

Now, why are there so many rules in a Cthulhu cultist language? Well, I wanted it to be hard to learn. Why are there so many fricatives - and so many strange fricatives at that, from odd languages, produced in hard-to-pronounce areas of the mouth and tongue? I wanted it to be hard to hear - to sound unnatural and weird. My players must be disturbed. (Also, I can't resist gimmicks like "Oops, all X!".)

With that out of the way, though, the problem with having lots of revisions is a problem of my own making. Why? Because I wanted to create a website that would teach people how to speak this language. Why did I want to create a website? Because, like all things which I think are cool, I wanted to share this with the world - and one day, maybe, if the stars are right and the Elder Gods are willing, to speak it and write a dissertation in it and get elected president and create an obelisk which will be the base of the national blood ritual... but that's getting a bit ahead of ourselves, isn't it?

So, the immediate need is to be able to create a website which somehow will not create extra work for me when I add a new word to the language, or I change what a word is, like the letters that are in a word or something like that. I need to be able to instantly propagate updates, from whatever language document I have, to this website. And here, in this post, is the story of how I did that.

[1] I would eventually add some non-fricative consonants, like the glottal stop, and liquids, for effect. The spirit of the gimmick is still here, though.

Part II: retrieve data from the beyond

Well, let's think about the data. Where does it live?

A dictionary is a list of words with arbitrary additional data attached to it. So, it seems obvious that I should have a spreadsheet for the dictionary. What about the phonology? Well, there's a list of sounds. That could be another sheet in the spreadsheet. (Eventually, I'd make an Awkwords[2] file for the phonological inventory.) And the prose and the rules obviously have to be Markdown files of some kind. They'll be compiled together using something like Jekyll or GitHub Pages, which automatically handles all that hosting and rendering nonsense for me.

But you see, now we have the issue. Never the twain shall meet. You can't automatically pull data from a spreadsheet and populate the inside of a Markdown document unless you have some kind of script running. Furthermore, the other issue is that if you are writing, for example, flip-flop-the-glip-glop in your spreadsheet, then you have to write down flip-flop-the-glip-glop in your Markdown file. And then if you want to change it to zip-zop-the-tip-top, you have to change it in both places.

So we actually have to go and fetch the spreadsheet, and we have to use some kind of reference that is independent of the actual sounds of the word. And then, once we do that, we can replace it in the Markdown file with no effort. This is where the idea of the linguistic glossing abbreviation comes in.
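Here is a minimal sketch of that idea, not the actual lugso.net code. The gloss labels, the `lookup` helper, and the placeholder word `glorp` are all made up for illustration; the point is only that the prose stores gloss labels, so a spelling change happens in exactly one place.

```javascript
// Hypothetical dictionary: gloss label -> current spelling.
// The entries are placeholders, not real Lugso words.
const dictionary = new Map([
  ["consume", "flip-flop-the-glip-glop"],
  ["1SG", "glorp"],
]);

// Look a gloss up, failing loudly on unknown labels so that
// typos in the prose surface at build time.
function lookup(dict, gloss) {
  if (!dict.has(gloss)) throw new Error(`unknown gloss: ${gloss}`);
  return dict.get(gloss);
}

// The prose only ever stores gloss labels, never spellings.
const sentence = ["1SG", "consume"];
const render = (dict) => sentence.map((g) => lookup(dict, g)).join(" ");

const before = render(dictionary);

// Revise the word in one place; the prose itself is untouched.
dictionary.set("consume", "zip-zop-the-tip-top");
const after = render(dictionary);

console.log(before);
console.log(after);
```

The same sentence renders differently before and after the dictionary edit, which is the whole trick.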

[2] Awkwords has been taken down since I last used it. Alternatives, proposed in the linked thread, are Lexifer and Langua (subtool "Gen").

Part III: linguistic gloss as quasi-objective store of meaning

So the idea behind the linguistic gloss is that we pretend that when we type an English word - but we use a fancy acronym instead of the English word - we can attach that English word to a different word in a different language and somehow arrive at some kind of universal objective description of what's going on in the grammar of this non-English language.

Okay, that was a bit facetious of a description, but it is a bit funny to me how this universal format for encoding the meaning and syntax of any language anywhere is written in Latin letters, more or less all derived from English terms of linguistics. If somebody wanted to argue that English were the best language, they might employ an example like this in their debate - but that is not the point of this blog post, nor may it necessarily be the opinion of your humble author... just an observation.

The important thing about the linguistic gloss is that it is a highly compressed, efficient system of labeling the role and function of each morpheme - each unit of meaning - in an utterance, and boxing it within a certain known context of linguistic rules. So if I say "a participle" - PTCP - what kind of participle is it? Future, simple past, imperfect? PTCP.PST, or something. Okay, "past" means something obtained this condition in the past, and it inheres within it to the present. Furthermore, this linguistic nugget, which has been labeled with PTCP.PST, attaches that property to an arbitrary unit, and how much that generalizes is obviously up to the language itself. We can't encode all the rules of the language, just the meanings of the various chunks.[3]

So in English, the same grapheme, the letter "A", may mean something different if we're talking about the article versus the prefix. Sometimes it means a thing, one thing, anything - and at other times, it means "not". Like a-theist means "not theist", but a theist means "one theist". We can't write that difference in linguistic glosses except to simply write down the "indefinite article" (INDF) versus the prefix "not" (NEG). The subtleties are not all captured by glosses, but they do a good enough job at serving as an objective, unchanging reference point for the meaning of a particular utterance - which is why they were so useful to me in the creation of this language documentation.

[3] See the list of glossing abbreviations for more, or check out an example from Lugso itself.

Part IV: the dumber the syntax, the happier the javascript

So my goal was to create a language documentation framework, and it was going to rely on a dictionary of words which correspond to a pair of linguistic gloss and Lugso word. Now, unless I wanted to write a bunch of complicated inflection rules, these word entries had to not change depending on aspects of the sentence - which is to say, they couldn't be inflected. You might know conjugation or noun declension from other languages in the human world, which involve changing a sound to a different sound to show that a particular grammatical aspect is present in the morpheme. Lugso, for multiple reasons - I think aesthetic partially, but also pragmatic here - opted instead for an agglutinative model, where to change or modify the meaning of a term, you add something to it.

Think of the English word red, which is an adjective; redness, which is a noun; unredness, which is the opposite of the noun redness; and so on. Or help, which is a verb; helpful, which is an adjective; helpfully, which is an adverb; unhelpfully; and so on. In the examples that I've given, the pieces which we are assembling don't change. Contrast this with a different kind of English verb: sing, sang, sung. It changes a vowel on the inside of the word to indicate that something is different about the meaning of the verb.

I had to choose the former approach by virtue of the lookup method I had settled on - but also, I kind of liked this approach, because the robotic accumulation of additional hard-to-pronounce sounds was serving my purpose of creating an alien language spoken by cultists in conference with incomprehensible intelligences.

As for phonetics: Lugso only has a single syllable form, which is two optional consonants and a vowel followed by three optional consonants, and I didn't want to get too far into the transformative or optional possibilities of that space - both in the interests of pragmatism, and because I wanted to keep it simple enough to actually learn while also having the potential for getting really complicated. English can approach this asymptote with a word like strengths (CCCVCCC), but that's a bit of an outlier - and when that level of hard-to-pronounce-ness is the norm, you start getting into some much more unfriendly territory.
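That syllable shape, (C)(C)V(C)(C)(C), is simple enough to check mechanically. The sketch below is only an illustration: the consonant and vowel sets are a toy inventory I made up for the example (one character per sound), not Lugso's actual phonology, and the function names are hypothetical.

```javascript
// Toy inventory, NOT Lugso's real one: every sound is a single character.
const VOWELS = new Set(["a", "i", "u"]);
const CONSONANTS = new Set(["f", "s", "z", "x", "v", "l"]);

// Map each symbol to "C" or "V"; return null for symbols outside the inventory.
function skeleton(syllable) {
  let out = "";
  for (const ch of syllable) {
    if (VOWELS.has(ch)) out += "V";
    else if (CONSONANTS.has(ch)) out += "C";
    else return null;
  }
  return out;
}

// (C)(C)V(C)(C)(C): up to two onset consonants, exactly one vowel,
// up to three coda consonants.
const SHAPE = /^C{0,2}VC{0,3}$/;

function isValidSyllable(syllable) {
  const s = skeleton(syllable);
  return s !== null && SHAPE.test(s);
}

console.log(isValidSyllable("u"));       // bare vowel
console.log(isValidSyllable("sfuzxl"));  // maximal CCVCCC
console.log(isValidSyllable("sfzu"));    // three onset consonants
```

A bare vowel and the maximal CCVCCC form both pass; a third onset consonant, a fourth coda consonant, or a missing vowel does not.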

Part V: tech stack & template language

So now, I could get down to business. I decided to host it on GitHub Pages using Jekyll for the static site and Gulp for the transformation function, which would intake Markdown files containing a very small domain-specific templating language. Within it was the ability for you to insert templating brackets with a prefix, which would change the behavior of the output text. Sometimes we want just to output Lugso. Sometimes we want Lugso on top of an English translation. Sometimes we want Lugso, English, and linguistic gloss. That, for now, was good enough. It looked like this:

${g: consume 1SG-ACC 2SG}$

The g prefix is the most verbose: it prints out a "pronounce button", the Lugso, and the gloss. Most other prefixes are subsets of this, but there's also d for debugging, and r for the entire dictionary row of the first word in the line, for example.
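For the curious, here is roughly how such a bracket could be picked out of a Markdown file. This is a sketch under assumptions, not the site's real Gulp code: the regular expression, the `parseTemplates` name, and the convention that spaces separate words while hyphens separate morphemes within a word are my guesses based on the example above.

```javascript
// Matches ${<prefix>: <gloss line>}$ where the one-letter prefix selects
// the output mode (g, d, r, ...).
const TEMPLATE = /\$\{([a-z]):\s*([^}]*?)\s*\}\$/g;

// Return one entry per template found: its prefix, plus the gloss line
// split into words (on whitespace) and morphemes (on hyphens).
function parseTemplates(markdown) {
  const found = [];
  for (const match of markdown.matchAll(TEMPLATE)) {
    const words = match[2]
      .split(/\s+/)
      .filter(Boolean)
      .map((word) => word.split("-"));
    found.push({ prefix: match[1], words });
  }
  return found;
}

const parsed = parseTemplates("The cultist says ${g: consume 1SG-ACC 2SG}$ and leaves.");
console.log(JSON.stringify(parsed));
```

Keeping the parser this dumb is the point: the prefix picks a renderer, and everything else is just a list of gloss labels to look up.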

I spent a lot of time messing with the exact formatting and appearance of this, but "code text for the gloss, prose for the English, and a fancy-looking, different-colored text for the Lugso" was what I eventually ended up settling on - as long as it could embed directly into Markdown.

Part VI: "... He can talk!"

lolvtu gu5li'ir 5ifzxi lhi no

ɮʌɮvθu ɣuʃɮiʔiɻ ʃifzxi ɮχi nʌ

I will praise the Lord at all times.

VI.A: restless on laurels

So now, the tool is in a state where I can write out a gloss of the meaning of a sentence - or, you know, 64,000 sentences or whatever - and the Gulp file will go and retrieve the dictionary from Google Sheets using their API, look up the Lugso word for each corresponding gloss entry, concatenate them into words, and produce the markup for the documentation for the website.
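The lookup-and-concatenate step can be sketched like so. Treat everything here as an assumption: I'm supposing the rows arrive as an array of arrays (the shape of the `values` field in a Sheets API `spreadsheets.values.get` response), the column names `gloss` and `lugso` are hypothetical, and the words are placeholders rather than real dictionary entries. The network call itself is left out.

```javascript
// Stand-in for the rows fetched from the spreadsheet: a header row
// followed by data rows. All entries are placeholders.
const sheetValues = [
  ["gloss", "lugso"],
  ["consume", "fa"],
  ["1SG", "su"],
  ["ACC", "z"],
  ["2SG", "vi"],
];

// Turn the rows into a gloss -> word map, locating columns by header name.
function buildDictionary(values) {
  const [header, ...rows] = values;
  const glossCol = header.indexOf("gloss");
  const wordCol = header.indexOf("lugso");
  if (glossCol < 0 || wordCol < 0) throw new Error("missing gloss/lugso column");
  const dict = new Map();
  for (const row of rows) {
    if (row[glossCol]) dict.set(row[glossCol], row[wordCol] ?? "");
  }
  return dict;
}

// Expand a gloss line: morphemes inside a word are concatenated directly,
// and words are joined with spaces.
function expand(dict, glossLine) {
  return glossLine
    .trim()
    .split(/\s+/)
    .map((word) =>
      word
        .split("-")
        .map((gloss) => {
          if (!dict.has(gloss)) throw new Error(`unknown gloss: ${gloss}`);
          return dict.get(gloss);
        })
        .join("")
    )
    .join(" ");
}

const dict = buildDictionary(sheetValues);
const expanded = expand(dict, "consume 1SG-ACC 2SG");
console.log(expanded);
```

Because nothing is inflected, `expand` never needs to know anything about the grammar; it only glues dictionary entries together.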

The excellent part of all of this is: any time I make a word change in the dictionary, I just run the tool again and rebuild the site, and the documentation is automatically generated. All that was left was to write out all of the grammar rules.

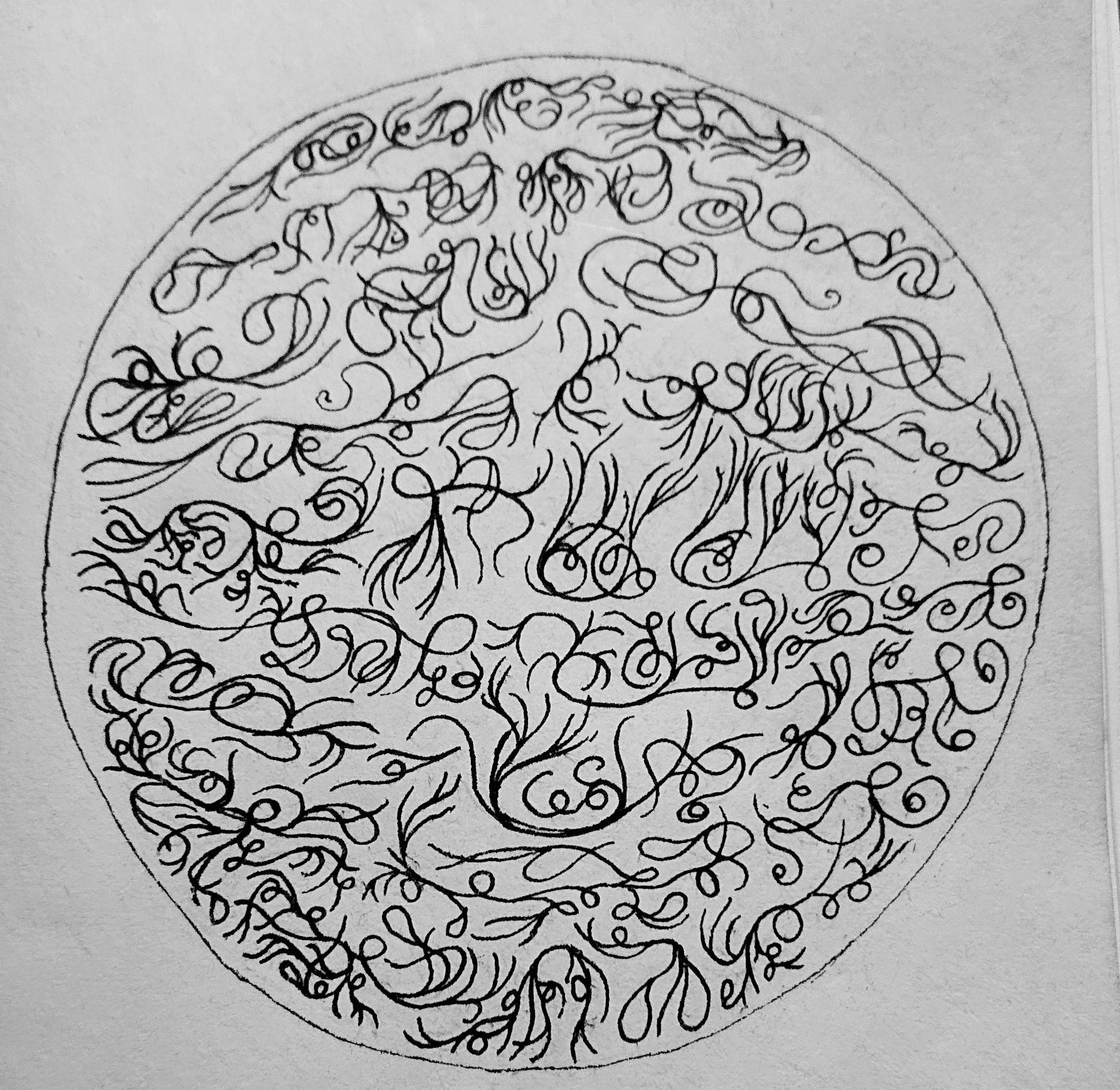

I then invented a writing system which resulted in these long, flowing, tentacular, abjad-like constructions that you can extend arbitrarily to create things that don't look like writing - they look more like ornamentation. But when you discover these tentacles on a statue - you look at them closely - when you examine them, parse them out, it turns out that they actually encode meaning. Kind of an insanity-inducing event.

That took quite a bit of time. Quite a bit of time later, I was done.

Except I also spent a bit of extra effort on making the site look and feel just right and producing some visuals. I also added a very subtle visual effect with particles going across the screen, to catch by surprise anybody who happened to be looking at the website a little bit too closely.

For some time, I also experimented with alternative markup schemes where, in the Lugso text, I would insert a dot underneath each morpheme, so that somebody who is looking at a 16-character word would be able to quickly parse which individual subcomponents went into the makeup of that long agglutination. But it was difficult to do this work with just Unicode and have the end results not interfere with the look and feel of the language. I may revisit this at some point. I may do something with CSS. I may do all manner of things. But for now, that remains an unresolved line item for people who are trying to break into Lugso.

VI.B: speech begins

However, this wasn't good enough for me. I wanted to let people who visited the website (and didn't know me in person) experience the eldritch horror of hearing the language "spoken", which meant that I had to either spend hundreds of hours spitting into a microphone, or come up with some way of generating speech from this collection of highly irregular sounds that I had selected from the International Phonetic Alphabet.

This might have been easier than it was if I had just picked normal fricatives. But, I had trawled through the IPA and picked the weird ones - like the voiceless lateral, or the uvular. Oh, there were also the voiceless and voiced bilabials. And remember, I decided to include them because of the fact that they were so rare and difficult to parse for people, in order to imbue the language with this strange, unfamiliar quality - and also because I wanted a large phonemic base in order to relax a bit of the constraints on the side of the phonotactics. All that rarity meant that satisfactory synthetic solutions were extremely hard to find.

So, I was looking into how to produce synthesized speech using IPA characters, and I found this website, ipa-reader.com[4]. I wanted a way to programmatically generate this same voice, which intakes an arbitrary IPA string and outputs a sound.

The backend for the website, I discovered, was an AWS Lambda function using Amazon Polly - like the parrot. Polly intakes a string and it outputs an audio file. And this was perfect for me, except for the fact that it was somebody else's resource, and I had moral issues with charging somebody fractions of a cent without their consent.

So I sent them an email, and I told them that in the unlikely event that, after I published the website, millions of people started using their service and running up a server bill for them, I would be the party responsible. Thankfully, they were fine with footing the bill, because they correctly saw that even after I released the website, no such popularity surge would be in evidence. With their blessing, I hooked up my server to call out to their server and generate eldritch enunciations on the fly.
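I can't show their backend, but for a sense of what "IPA in, sound out" looks like: Polly accepts SSML, and SSML has a `<phoneme>` tag that takes an IPA string. The sketch below only builds that SSML string. Whether ipa-reader.com phrases its requests exactly this way is an assumption on my part, and the helper names are hypothetical.

```javascript
// Escape characters that are special inside XML text and attributes.
function escapeXml(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

// Wrap an IPA string in an SSML <phoneme> tag. The romanized text is
// included as the tag's content for readability and as a fallback.
function ipaToSsml(ipa, romanized) {
  return (
    "<speak>" +
    `<phoneme alphabet="ipa" ph="${escapeXml(ipa)}">${escapeXml(romanized)}</phoneme>` +
    "</speak>"
  );
}

const ssml = ipaToSsml("nʌ", "no");
console.log(ssml);
```

The resulting string is what would be handed to a speech service as SSML input; the actual request, voice choice, and audio handling are not shown here.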

All that was left to do was add the feature flag to the templating language, and then, throughout the website, everywhere you want, there's a little speaker button you can click - and the computer will say Cthulhu words at you.

[4] Support Katie, the creator, on Ko-fi!

Part VII: what comes next

All right, so now where do we actually go next? Well, as I was saying: after getting an influx of new learners, I would like to set up a community of people who will produce and consume content in this language in order to summon the Elder Gods.

Key to this will be creating a prompt, or perhaps a trained network - although I guess that latter one would be less good than a sufficiently powerful model with a sufficiently good prompt - to actually produce new Lugso utterances and to help train people in the speaking of the language.

Before that comes out, though, I think it would be responsible of me to crystallize a version of the language in a state that would be serviceable for large numbers of people. There's nothing wrong with adding new words, of course, and the noun compound system - which is inspired by Toki Pona - would also help make up for the somewhat small dictionary being insufficient for some everyday tasks.

And although I have no problem with lexicalization of certain things - in fact, it's actively encouraged - I would like to avoid excessive lexicalization, because the compounds can get rather long and difficult to say, which would militate against ergonomics.

Once there are bots speaking, though, I think usage would ideally take off. And in a server where the bot can be hooked up - similar to, or perhaps identical to, the one that I mentioned in one of my previous blog posts, Jeeves - in a Lugso mode with a custom prompt, I think we're off to the races.

Since I'm recording this before publicly launching the blog, I've actually had the chance to come back to the Jeeves bot and add a mode for Lugso. I've passed in the abbreviated grammar rules and dictionary in the prompt and asked it to translate, which is something that I've been trying to make happen for years - since GPT-2. For ages, it just wasn't happening - they'd hallucinate constantly, get words wrong, etc. I was clearly to blame, at least partially, for my lack of prompt science; but the models themselves simply didn't get it. At some point in the past couple of model generations, though, they finally did. The last models that couldn't speak Lugso were GPT-4/Claude Sonnet 3.5. Opus 4.5 can, though. I wonder if they'd be able to manage a more inflectional language, but still - I had hoped for it; now we're here.

But a chatbot's not good enough for me. I also hooked up the voice synthesis to the aforementioned IPA speaking functionality, so that instead of using ElevenLabs, we produce the raw IPA sounds for the language and pipe that out into the Discord. It's not as nice as Jonathan Cecil's synthesized voice, but it's more accurate to the way that the language should sound.

ugonogl lugso ("home of the blood")

To that end, I have created such a Discord server (fitting name, chaos is disorder) and hooked up Jeeves to it. I have disabled the ability for others to change his settings, but if you want to come in and play around with Lugso and do some learning, you are more than welcome.

The invite is open and will stay open until such time as the stars are right and Cthulhu eats all the faithful first.